Model AV testing

Two Washington University faculty members and their research teams build the “WashU Mini-City” — a novel and low-cost physical environment — to study autonomous vehicles and, ultimately, to improve their reliability and safety

In March 2018, Elaine Herzberg was walking her bicycle across a darkened street in Tempe, Arizona, when an Uber SUV in autonomous driving mode struck and killed her. The Guardian reported that the Uber vehicle detected Herzberg more than five seconds before the crash, but it could not identify her as a pedestrian, and the backup driver was not paying attention to the road. It was the first reported instance of a pedestrian being killed by a self-driving vehicle.

Autonomous vehicles (AVs), cars that can drive themselves without human control, are taking to streets and highways across the country with increasing frequency. The McKinsey & Company consulting firm estimates that cars with self-driving technology could constitute 20% of auto sales by 2030, and 57% by 2035. The safety record of the new technology, however, remains in question.

Since Herzberg’s death, accidents caused by autonomously driven vehicles have continued to make headlines, and AAA reports that fear of self-driving cars rose markedly last year. Definitive numbers are hard to come by, due to the method by which the National Highway Traffic Safety Administration handles statistics on AV accidents, but in June the Washington Post reported that Tesla’s Autopilot self-driving system has been involved in 736 crashes and 17 deaths since 2019.

Proponents argue that their data show that AVs are safer than human drivers overall, but a recent detailed review by Ars Technica, an online publication that covers technology, concluded it is too early to say. It is clear, though, that the artificial intelligence driving AVs can be prone to error in novel situations. AI algorithms may be able to beat grandmasters at chess, but they are devoid of, for lack of a better word, common sense.

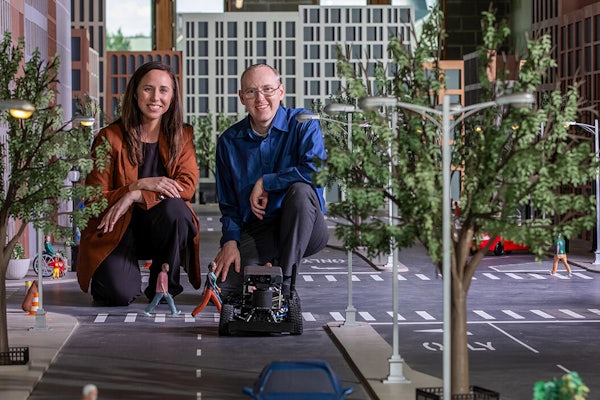

As scrutiny of autonomous vehicles increases, researchers at Washington University in St. Louis are puzzling out the best ways to improve their safety. What if, ask computer engineer Yevgeniy (Eugene) Vorobeychik and architect Constance Vale, you could build a scale model of an urban neighborhood to test self-driving cars, in an environment that you could customize to recreate the kinds of situations that cause problems for autonomous vehicles, all without endangering people or property? The two and their research teams are doing just that in the Architectural Design of an Experimental Platform for Autonomous Driving project: the Washington University Miniature City (WashU Mini-City), constructed in McKelvey Hall.

The goal of WashU Mini-City is to provide a physical autonomous driving platform that combines computer science and architecture to allow novel experimentation in a safe environment — at a low cost. The mini-city aims to be a laboratory for broader stress-testing and monitoring of AVs, ultimately increasing their safety and reliability. The project is funded by seed grants from WashU’s Office of the Vice Chancellor for Research and Provost’s Office, as well as the National Science Foundation.
